Microsoft Foundry: The AI Platform Most People Haven’t Heard About Yet

When people talk about AI today, the conversation almost always starts with products.

You hear names like ChatGPT, Gemini, Claude, or Microsoft Copilot.

And honestly, they’re incredible products.

They help us write documents faster, summarize meetings, generate code, brainstorm ideas, and automate everyday tasks. They’re polished, accessible, and designed to boost individual productivity right out of the box.

But there’s a huge distinction that often gets overlooked.

These tools are AI as a product.

What many organizations are actually looking for is something very different:

AI as a platform.

And that’s where Microsoft Foundry enters the picture.

AI Product vs AI Platform

Think about it this way.

Using ChatGPT is like driving a finished car.

Using Microsoft Foundry is like owning the factory, the assembly line, and the engineering toolkit that lets you build vehicles specifically for your business.

One is optimized for general usage.

The other is designed for customization, orchestration, integration, governance, and scale.

That distinction matters more than ever.

Because most enterprises today are no longer asking:

“How can employees use AI?”

They’re asking:

“How can we embed AI into our business itself?”

That changes everything.

The Problem with "Just Use ChatGPT"

Many organizations start their AI journey with consumer AI tools.

And that makes sense.

Teams experiment with prompts. Developers prototype quickly. Productivity improves immediately.

But eventually, limitations start appearing.

Questions begin to surface like:

- How do we connect AI to our internal systems?

- Can AI access our company knowledge securely?

- How do we control permissions and governance?

- What model should we use for each workload?

- How do we orchestrate agents together?

- How do we monitor cost and performance?

- How do we integrate AI into existing applications?

A concrete example: imagine a bank that wants to answer customer questions using internal policy documents and transaction history. ChatGPT alone can't securely access those systems, enforce the bank's compliance rules, or route requests through its existing infrastructure. That's when organizations realize they need more than a product. They need a platform.

At that point, the conversation shifts from:

“Which AI tool should we use?”

to:

“How do we build AI-powered systems?”

That’s the moment when platforms become more important than products.

So What Exactly Is Microsoft Foundry?

Microsoft Foundry is Microsoft’s enterprise AI platform designed to help organizations build, orchestrate, deploy, and govern AI-powered applications and agents.

It’s not just a chatbot.

It’s an AI engineering ecosystem.

At a high level, Foundry provides capabilities such as:

- Access to multiple AI models

- Agent orchestration

- Prompt flows and workflow pipelines

- Enterprise security and governance

- Knowledge grounding and retrieval

- Integration with existing enterprise systems

- Observability and evaluation

- AI application lifecycle management

This is a very different mindset from opening a browser and chatting with an AI assistant.

Foundry is about embedding intelligence directly into business processes and applications.

Why This Matters Architecturally

This is the part that gets especially interesting for architects and engineers.

For years, enterprise systems were built around deterministic logic.

Input goes in. Rules execute. Output comes out.

AI changes that architecture completely.

Now we’re designing systems where:

- Reasoning becomes part of the application flow

- Multiple models collaborate

- Agents make contextual decisions

- Retrieval systems dynamically inject knowledge

- Natural language becomes an interface layer

- Workflows become probabilistic rather than fully deterministic
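A few lines of Python can illustrate the shift from deterministic rules to probabilistic reasoning. This is a minimal sketch under stated assumptions, not a Foundry API. `classify_with_rules`, `classify`, and the intent labels are hypothetical, and `model` stands in for any callable that returns a model's text answer. The pattern it shows is common in AI-native designs. The model's output is treated as a proposal, and ordinary deterministic code validates it before the application acts on it.

```python
from typing import Callable

# Hypothetical set of intents the application knows how to handle.
ALLOWED_INTENTS = {"billing", "technical", "account"}


def classify_with_rules(text: str) -> str:
    """Deterministic logic: the same input always produces the same output."""
    lowered = text.lower()
    if "invoice" in lowered or "charge" in lowered:
        return "billing"
    if "password" in lowered or "login" in lowered:
        return "account"
    return "technical"


def classify(text: str, model: Callable[[str], str]) -> str:
    """Model-driven step wrapped in deterministic guardrails.

    The model's answer is treated as a proposal: it is normalized and checked
    against an allow-list, and the rule-based path is used if it fails.
    """
    try:
        proposal = model(text).strip().lower()
    except Exception:  # stands in for endpoint errors, timeouts, etc.
        return classify_with_rules(text)
    return proposal if proposal in ALLOWED_INTENTS else classify_with_rules(text)


if __name__ == "__main__":
    # A stub "model" that returns an off-list label, triggering the fallback.
    print(classify("I can't reset my password", lambda t: "weather"))
```

Because the model is passed in as a plain function, the guardrail logic can be tested without calling a real endpoint.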

Traditional application architecture starts evolving into AI-native architecture.

And platforms like Microsoft Foundry are designed specifically for this transition.

What Actually Makes an AI Agent?

This is where the architecture becomes concrete.

When people hear the term “AI agent,” they often imagine a single intelligent chatbot.

But in reality, enterprise AI agents are composed of multiple architectural components working together.

An agent is not just a model.

It’s an entire system.

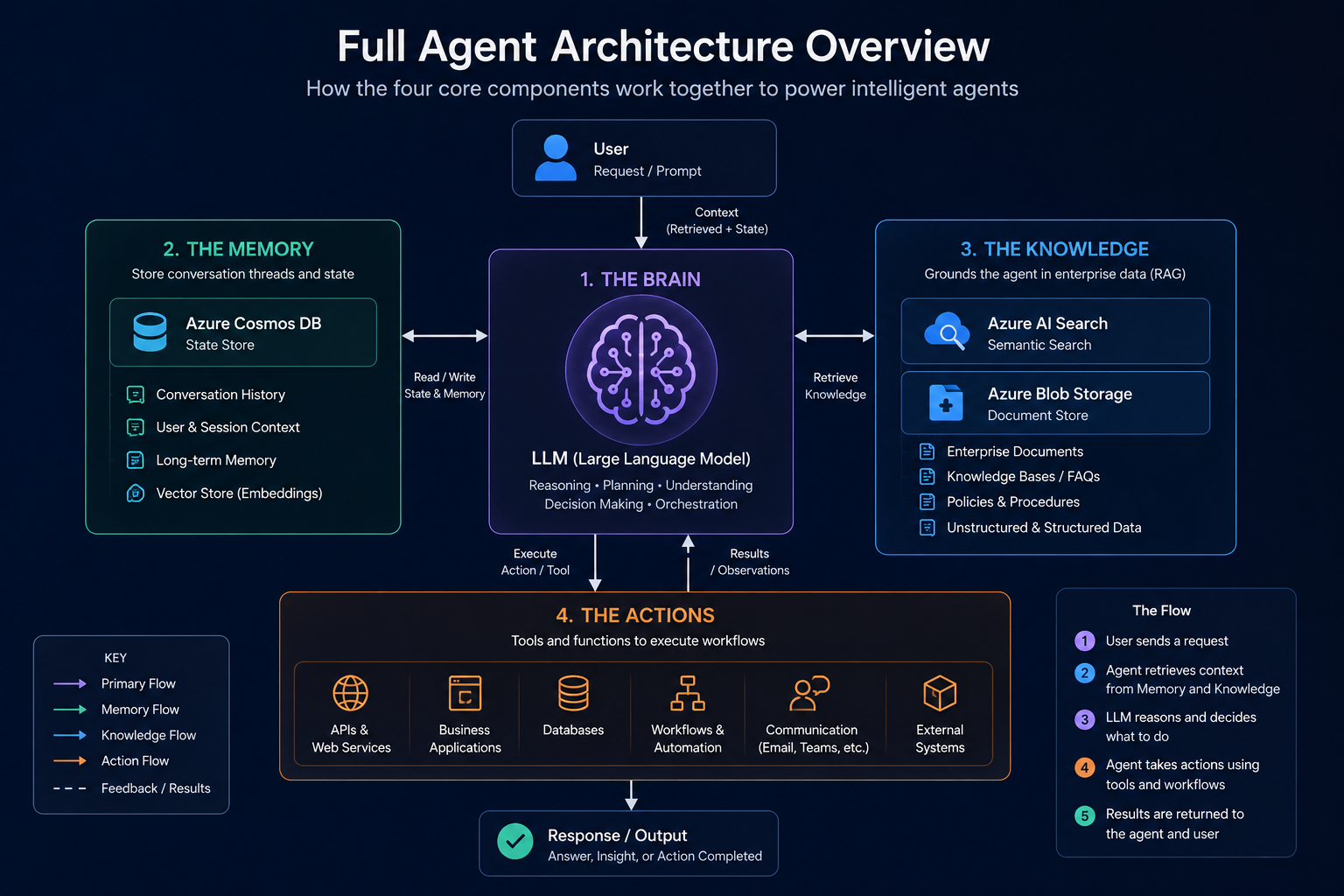

At a high level, modern AI agents usually consist of four major components:

1. The Brain — Models

This is the reasoning engine.

The Large Language Model (LLM) interprets intent, generates responses, makes decisions, and orchestrates reasoning workflows.

And this is no longer limited to a single model provider.

Modern AI platforms increasingly support a multi-model ecosystem including:

- OpenAI

- Anthropic

- Meta

- Mistral AI

- Cohere

- DeepSeek

This is one of the key ideas behind Microsoft Foundry.

The platform is designed to give organizations flexibility in choosing the right model for the right workload rather than locking into a single provider.

This matters because different models excel at different tasks.

Some are optimized for:

- Reasoning

- Coding

- Summarization

- Multimodal understanding

- Low latency

- Cost efficiency

Enterprise AI architecture is quickly becoming a model orchestration problem, not simply a single-model problem.
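A workload-to-model routing table makes the model orchestration idea concrete. The Python below is a minimal sketch under stated assumptions. The deployment and provider names are hypothetical placeholders, not real Foundry identifiers. In practice each entry would point at a model deployment configured in your own project, and the client call that actually invokes the model is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelChoice:
    deployment: str  # hypothetical deployment name in your own project
    provider: str    # placeholder, not a real provider mapping
    reason: str


# Hypothetical routing table: each workload type maps to the model judged best for it.
ROUTES: dict[str, ModelChoice] = {
    "reasoning": ModelChoice("reasoning-large", "provider-a", "multi-step analysis"),
    "coding": ModelChoice("code-model", "provider-b", "code generation"),
    "summarization": ModelChoice("fast-small", "provider-c", "low latency, low cost"),
}

# Fallback used when a workload has no dedicated route.
DEFAULT = ModelChoice("general-purpose", "provider-a", "default")


def route(workload: str) -> ModelChoice:
    """Return the model choice for a workload, falling back to the default."""
    return ROUTES.get(workload.strip().lower(), DEFAULT)


if __name__ == "__main__":
    for task in ["coding", "Summarization", "translation"]:
        choice = route(task)
        print(f"{task} -> {choice.deployment} ({choice.reason})")
```

Keeping the routing as data rather than hard-coded branches lets a team swap the model for one workload without touching application logic.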

2. The Memory — State & Conversation History

Agents need memory.

Without memory, every interaction becomes stateless and disconnected.

Enterprise agents often require:

- Conversation history

- User context

- Session state

- Long-term memory

- Semantic retrieval memory

This is where databases become critical.

Services like Azure Cosmos DB play an important role by providing:

- Low latency at global scale

- Stateful conversation storage

- Vector search capabilities

- High-throughput workloads

In AI-native systems, databases are no longer just persistence layers.

They become cognitive memory systems.

This is a major architectural shift.

Traditional applications stored records.

AI-native applications increasingly store:

- Context

- Embeddings

- Semantic relationships

- Conversation threads

- Agent state

Memory becomes part of intelligence itself.
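A small in-memory sketch can illustrate the memory layer. `ConversationMemory` is a hypothetical class that stands in for a persistent store such as Azure Cosmos DB. It does not use the Cosmos DB SDK, persists nothing, and leaves out long-term and semantic (vector) memory. It covers two items from the list above: conversation history keyed by session, and per-session state. To keep prompts bounded, it returns only the most recent turns.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Message:
    role: str  # "user" or "assistant"
    content: str
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class ConversationMemory:
    """Hypothetical in-memory stand-in for a persistent store such as Cosmos DB.

    Threads are keyed by session ID, the way a real store might partition
    conversation documents. Nothing here is persisted.
    """

    def __init__(self, max_turns: int = 10) -> None:
        self.max_turns = max_turns
        self._threads: dict[str, list[Message]] = defaultdict(list)
        self._state: dict[str, dict[str, str]] = defaultdict(dict)

    def append(self, session_id: str, role: str, content: str) -> None:
        """Add one message to the session's thread."""
        self._threads[session_id].append(Message(role, content))

    def recent(self, session_id: str) -> list[Message]:
        """Return only the last `max_turns` messages so prompts stay bounded."""
        return self._threads.get(session_id, [])[-self.max_turns:]

    def set_state(self, session_id: str, key: str, value: str) -> None:
        """Store a piece of session state, such as a user preference."""
        self._state[session_id][key] = value

    def get_state(self, session_id: str, key: str, default: str = "") -> str:
        """Read session state without creating empty entries."""
        return self._state.get(session_id, {}).get(key, default)


if __name__ == "__main__":
    memory = ConversationMemory(max_turns=2)
    memory.append("s1", "user", "What is my order status?")
    memory.append("s1", "assistant", "Order 123 shipped yesterday.")
    memory.append("s1", "user", "When will it arrive?")
    memory.set_state("s1", "customer_tier", "gold")
    print([m.content for m in memory.recent("s1")])
    print(memory.get_state("s1", "customer_tier"))
```

With `max_turns=2`, `recent("s1")` returns only the assistant's reply and the follow-up question. The first user message is still stored but stays out of the prompt.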

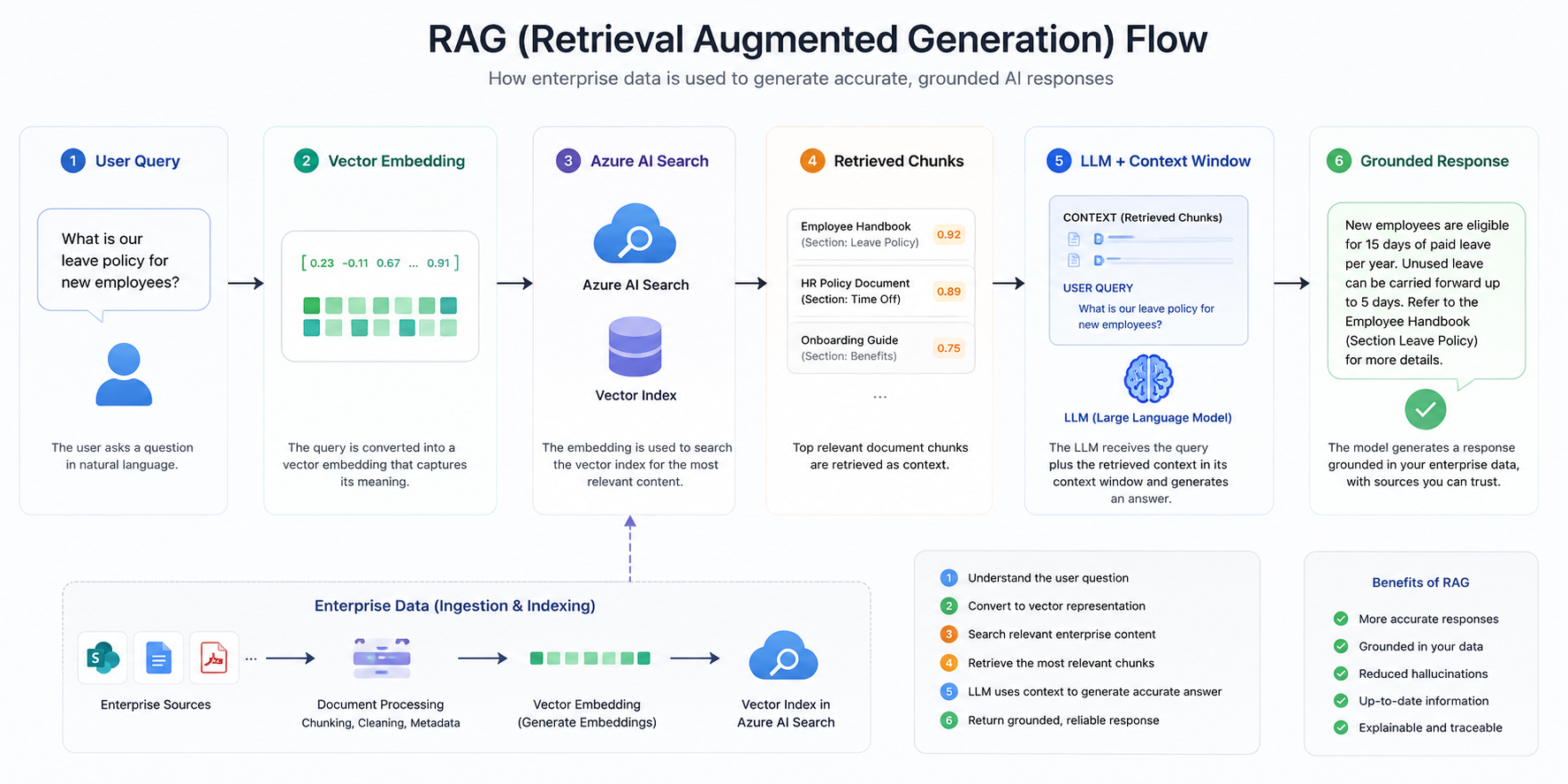

3. The Knowledge — Grounding with Enterprise Data

One of the biggest limitations of standalone AI models is that they only know what they were trained on.

Enterprise agents need access to proprietary organizational knowledge.

That introduces concepts like:

- Retrieval-Augmented Generation (RAG)

- Vector embeddings

- Semantic search

- Knowledge grounding

This is where services like:

- Azure AI Search

- Azure Blob Storage

become foundational building blocks.

Together, they allow agents to retrieve relevant enterprise knowledge dynamically before generating responses.

This can significantly improve:

- Accuracy

- Relevance

- Context-awareness

- Factual reliability (fewer hallucinations)

Without grounding, enterprise AI quickly becomes unreliable.

Because enterprise value does not come from generic internet knowledge.

It comes from:

- Internal documents

- Operational procedures

- Customer data

- Enterprise systems

- Organizational context

This is why RAG has become one of the most important patterns in modern AI architecture.
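The retrieve-then-generate pattern behind RAG fits in a short Python sketch. It makes a deliberate substitution: a bag-of-words term-frequency vector plays the role of the embedding, and a Python dict plays the role of the search index. A real pipeline would call an embedding model and query a service such as Azure AI Search. The function names and the sample policy texts are hypothetical. The shape is the point: rank passages by similarity to the question, then inject the top matches into the prompt before the model answers.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts. A real system would use an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[tuple[str, float]]:
    """Rank documents by similarity to the query and return the top k (id, score)."""
    q = embed(query)
    scored = [(doc_id, cosine(q, embed(text))) for doc_id, text in docs.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


def build_grounded_prompt(query: str, docs: dict[str, str], k: int = 2) -> str:
    """Inject retrieved passages into the prompt so the model answers from them."""
    hits = retrieve(query, docs, k)
    context = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id, score in hits if score > 0)
    return (
        "Answer using only the sources below. Cite source IDs.\n"
        f"Sources:\n{context}\n\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    policies = {
        "refund-policy": "Refunds are issued within 14 days of purchase with a receipt.",
        "travel-policy": "Employees must book travel through the approved portal.",
        "security-policy": "Passwords must be rotated every 90 days.",
    }
    print(build_grounded_prompt("How many days do customers have to request refunds?", policies))
```

The refund policy ranks first for the sample question because it shares the words "days" and "refunds" with the query. Real embeddings would also match paraphrases that share no exact words.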

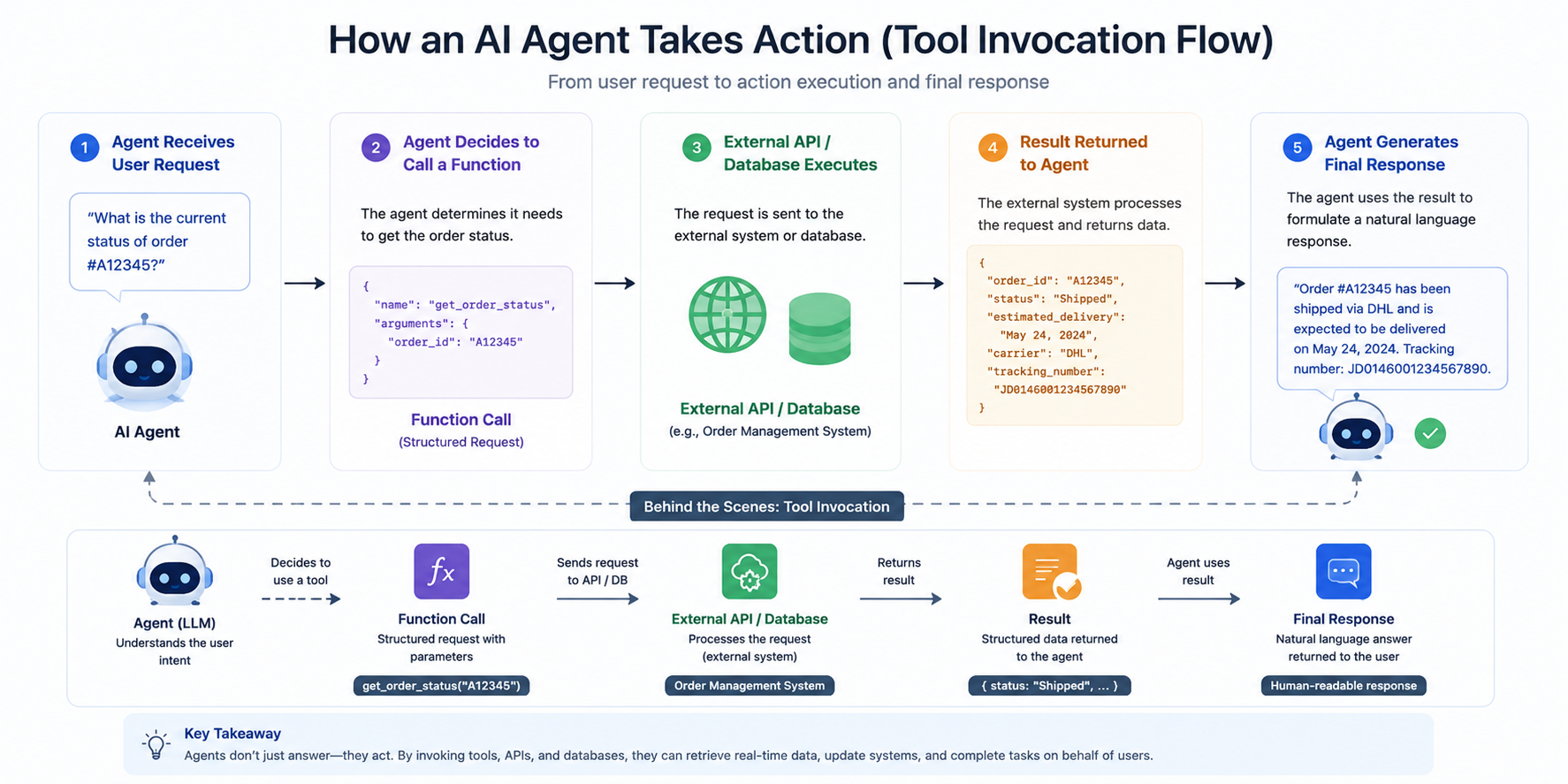

4. The Action Layer — Tools & Workflows

Reasoning alone is not enough.

Real enterprise agents need the ability to take action.

That means interacting with:

- APIs

- Databases

- Business systems

- Workflows

- External services

This is where tools and protocols become important.

Modern agent architectures increasingly rely on concepts like:

- Function calling

- Tool orchestration

- Workflow execution

- MCP (Model Context Protocol)

This transforms agents from passive assistants into active systems capable of executing real business operations.

For example, an enterprise AI agent may:

- Retrieve customer information

- Generate a report

- Trigger an approval workflow

- Create support tickets

- Query internal systems

- Send notifications

- Execute operational tasks
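A minimal Python sketch can show how function calling turns a model's decision into an action. The names are hypothetical: `create_support_ticket` and `send_notification` are invented tools that return dummy data instead of calling real systems. The JSON shape `{"tool": ..., "arguments": ...}` is also an assumption, since each model provider defines its own tool-call format. The application keeps an allow-list of tools. It parses the model's requested call, runs only registered tools, and returns errors for anything malformed.

```python
import json
from typing import Any, Callable

# Allow-list mapping tool names to the Python functions the agent may invoke.
TOOLS: dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Decorator that registers a function as a callable tool."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def create_support_ticket(customer_id: str, summary: str) -> dict:
    # Hypothetical: a real implementation would call the ticketing system's API.
    return {"ticket_id": f"TCK-{customer_id}", "summary": summary, "status": "open"}


@tool
def send_notification(recipient: str, message: str) -> dict:
    # Hypothetical: a real implementation would call an email or chat service.
    return {"delivered_to": recipient, "message": message}


def dispatch(model_output: str) -> dict:
    """Parse a tool call emitted by the model and execute it.

    Assumes JSON shaped like {"tool": "<name>", "arguments": {...}}.
    """
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError:
        return {"error": "model output was not valid JSON"}
    name = call.get("tool")
    if name not in TOOLS:
        # Refuse anything outside the allow-list instead of guessing.
        return {"error": f"unknown tool: {name}"}
    try:
        return {"result": TOOLS[name](**call.get("arguments", {}))}
    except TypeError as exc:  # wrong or missing arguments
        return {"error": str(exc)}


if __name__ == "__main__":
    simulated = json.dumps({
        "tool": "create_support_ticket",
        "arguments": {"customer_id": "C042", "summary": "Card declined at checkout"},
    })
    print(dispatch(simulated))
```

The model only proposes the call. The application decides whether that call is allowed and performs it, which is where permissions and auditing can be enforced.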

At this point, AI stops being a chatbot.

It becomes part of the enterprise execution layer itself.

This Is Why AI Platforms Exist

Once you understand these four layers, the role of platforms like Microsoft Foundry becomes much clearer.

Enterprise AI is no longer about a single chatbot UI.

It’s about orchestrating:

- Models

- Memory

- Knowledge

- Actions

- Security

- Governance

- Observability

- Scalability

into a unified AI-native architecture.
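A deliberately small agent loop in Python shows how the four layers connect. Everything in it is hypothetical. `fake_model` is a scripted stub, not a real model call. `lookup_order` is an invented tool, and retrieval is a naive keyword match. The sketch traces the control flow a platform has to manage: record the turn in memory, ground the prompt with knowledge, let the model choose an action, execute it, and stop within a step limit. It does not describe how Foundry itself implements agents.

```python
import json
from typing import Callable


def fake_model(prompt: str) -> str:
    """Stub for the model layer: asks for a tool first, answers once a result exists."""
    if "TOOL_RESULT" not in prompt:
        return json.dumps({"tool": "lookup_order", "arguments": {"order_id": "123"}})
    return json.dumps({"answer": "Order 123 has shipped."})


def lookup_order(order_id: str) -> str:
    """Hypothetical action: a real version would query an order system."""
    return f"order {order_id}: shipped"


TOOLS: dict[str, Callable[..., str]] = {"lookup_order": lookup_order}


def run_agent(question: str, knowledge: list[str], history: list[str],
              max_steps: int = 3) -> str:
    """One pass through the layers: memory, knowledge, model, and actions."""
    history.append(f"USER: {question}")  # memory: record the turn

    # Knowledge: naive keyword overlap stands in for a real retrieval service.
    terms = set(question.lower().split())
    context = [doc for doc in knowledge if terms & set(doc.lower().split())]

    prompt = "\n".join(["CONTEXT:", *context, "HISTORY:", *history])
    for _ in range(max_steps):  # governance: bound how long the agent may run
        reply = json.loads(fake_model(prompt))  # model: decide the next step
        if "answer" in reply:
            history.append(f"ASSISTANT: {reply['answer']}")
            return reply["answer"]
        if reply.get("tool") not in TOOLS:
            return "Stopped: model requested an unknown tool."
        result = TOOLS[reply["tool"]](**reply["arguments"])  # action: run the tool
        prompt += f"\nTOOL_RESULT: {result}"
    return "Stopped: step limit reached without an answer."


if __name__ == "__main__":
    turns: list[str] = []
    docs = ["Standard shipping takes 3 to 5 business days."]
    print(run_agent("Where is order 123?", docs, turns))
    print(turns)
```

In production, each stub would be replaced by a managed service, and security, observability, and cost tracking would wrap every step of the loop. Those cross-cutting concerns are what a platform adds beyond the loop itself.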

One of the biggest misconceptions right now is treating AI as a feature you bolt onto an existing product. In reality, AI is rapidly becoming infrastructure. Just as cloud computing transformed application hosting, AI platforms are transforming application intelligence itself.

A few years from now, most enterprise applications will likely include:

- AI reasoning layers

- Agent orchestration

- Semantic retrieval

- Context memory

- Autonomous workflows

- Natural language interaction

And companies will need platforms capable of running these systems securely at scale. That’s the real role of Microsoft Foundry: not replacing ChatGPT, but enabling organizations to build their own AI-powered ecosystems.

Final Thoughts

Right now, the public AI conversation still revolves heavily around consumer products.

ChatGPT. Gemini. Claude. Copilot.

And those tools absolutely deserve the attention they’re getting.

But beneath that surface, another transformation is happening quietly inside enterprises.

Organizations are beginning to realize that the real long-term value of AI is not simply using AI.

It’s building with AI.

That requires a platform.

And while many people haven’t heard much about Microsoft Foundry yet, there’s a strong chance they will in the coming years.

Because the future of enterprise AI probably won’t be just a chatbot sitting in a browser tab.